Intro to Progressive Analytics #3: Metrics & Delivery

Progressive Analytics is a framework that strives to achieve value from analytics quickly and sustainably. It is designed on principles of agile and lean, tailored to the modern data architecture, and born from real-world experience delivering analytics platforms.

A solid framework helps most when things get off track. Without a delivery framework that is modular and incremental, it is difficult to point precisely to where things are going wrong.

This post gets to the question: what are we building? Earlier posts focused on two others: why are we doing this, and where and how are we getting our data?

It is far too common, and far too easy, to jump from a whiteboard or requirements session straight into implementation. Although data architecture is often planned well, operations gets less attention. Worse, metrics management often gets no attention at all, nor does a plan for things like report or dashboard governance.

How many times have your audience members questioned the implementation of a metric (e.g. “I thought we were going to exclude canceled memberships in this number?”) or lost track of where to find answers (“Where was that dashboard again?”)?

In my experience, too little time is spent defining a clear plan for delivery and management of metrics. This layer of Progressive Analytics can be iterative too. Start lightweight, and as the traction and size of the analytics program grow, delivery and metrics management can grow with them.

Delivery

Delivery refers to how we deliver analytics to our Audiences. This is the “last mile” in delivering analytics to the people who need them. There is a lot to think about here, but again, this can be iterative. Below I list some of the things we discuss and think about. In some iterations this is a five-minute discussion; in others it takes longer.

Data Products

Originally we called these delivery vehicles. A vehicle is “a medium through which something is expressed, achieved, or displayed.” I liked the analogy of the mail or delivery truck taking a package the last mile to the final destination. Although Data Product does not have a consistent definition, the industry seems to be converging on this term.

Examples of Data Products include:

- Internal dashboards

- Customer-facing dashboards embedded in a SaaS app or web site

- Datasets surfaced in a data catalog as part of a data mesh

- Independent, embedded visuals in a mobile or SaaS app

- Direct database connections for statistical tools, or R or Python connections

- Downloads into spreadsheets for analysts

- Data apps in Streamlit or Plotly Dash

- Downstream data pipelines for other teams or companies to consume

Defining Audiences is a prerequisite and input into defining Data Products.

Prioritization

Data Products are often implied and very clear to the people driving the effort, but easily misunderstood by the broader set of stakeholders. Even simple parameters, like whether a dashboard is internal or external, can easily be assumed by someone too close to the work. Clear and explicit discussions help align all stakeholders.

If your plan calls for multiple Data Products, prioritize and draw a line to limit the amount of work in progress. Dealing with a single or limited set of Data Products at a time in any one iteration will increase delivery velocity.

Governance

Much has been said about report and dashboard governance, and I will not attempt to cover everything here. Still, data governance often receives much more attention than report governance.

Defining clear owners of each Data Product is the first step to make sure they stay accurate and maintained.

With Audiences and Questions already defined as inputs, security and permissions for Data Products are usually straightforward.
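As a minimal sketch of the two governance points above (every Data Product has an owner, and permissions follow from Audiences), here is one way to write them down. The `DataProduct` class, the field names, and the example products are all hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    """One deliverable, e.g. a dashboard or dataset. Fields are illustrative."""
    name: str
    owner: str  # person or team accountable for keeping it accurate
    audiences: frozenset = field(default_factory=frozenset)  # Audiences allowed to view it


def can_view(product, user_audiences):
    """A user may view a product if they belong to at least one of its Audiences."""
    return bool(product.audiences & set(user_audiences))


def products_without_owner(products):
    """Names of Data Products that nobody is accountable for yet."""
    return [p.name for p in products if not p.owner.strip()]


# Hypothetical examples
board_report = DataProduct(
    "Quarterly board report",
    owner="cfo@example.com",
    audiences=frozenset({"board"}),
)
churn_dashboard = DataProduct(
    "Membership churn dashboard",
    owner="",  # no owner assigned yet
    audiences=frozenset({"retention_team", "executives"}),
)
```

Calling `products_without_owner([board_report, churn_dashboard])` flags the churn dashboard, which is the kind of gap worth closing before the product goes live.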

Lifecycle

Versioning dashboards, datasets, data models, etc. is not common in analytics platforms, but it could be, and it brings a lot of value when needed.

Software development and product management have strong concepts of lifecycle (i.e. design, development, test, version, deploy, rollback). Not so much when it comes to Data Products. I expect this will change over time as data product management matures.

How will you make updates to a dashboard that is live and used by dozens or hundreds of people each day? That plan might be different compared with a quarterly PDF report that gets manually emailed to the board of directors.

Metrics

Surprisingly, Metrics management is a missing piece of modern data platforms. This is not a problem until you get past the first few iterations, when it becomes impossible to remember all the metrics’ definitions and implementations.

Metrics are the atomic unit of analytics. The business doesn’t care to think in terms of quarks or gluons. They want to answer questions through analysis of metrics, trends on those metrics, and combinations of those metrics.

All too often, metrics are implemented in a BI tool. This is a bad practice, because any other Data Product either has to be based on that BI tool, or you must re-implement the metric logic in multiple locations. This is a recipe for inconsistent metrics, leading to the Audiences losing trust in the data platform as a whole. This goes against all the reasons we have centralized data warehouses in the first place.
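To illustrate the point, here is a small sketch in which the metric logic lives in one place and two different Data Products call it. In practice that one place would more likely be the warehouse or a modeling layer; plain Python stands in for it here, and the `active_memberships` metric, the `status` field, and the sample rows are assumptions made up for the example.

```python
def active_memberships(memberships):
    """Single definition of the metric: memberships that are not canceled."""
    return sum(1 for m in memberships if m["status"] != "canceled")


def dashboard_tile(memberships):
    """Data Product 1: a dashboard tile that reuses the shared definition."""
    return {"title": "Active memberships", "value": active_memberships(memberships)}


def spreadsheet_row(memberships):
    """Data Product 2: a spreadsheet export that reuses the same definition."""
    return ["active_memberships", active_memberships(memberships)]


# Hypothetical sample data
sample = [
    {"id": 1, "status": "active"},
    {"id": 2, "status": "canceled"},
    {"id": 3, "status": "active"},
]
```

Because both products call the same function, a change to the rule (say, also excluding paused memberships) lands in the dashboard and the spreadsheet at the same time.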

Regardless of where they are implemented, metrics need a name, a business definition, a technical definition, and an owner. We track these in a simple spreadsheet, but it is easy to let it gather dust. To keep it alive, we make it the reference point: in conversations with Audience members or analysts about metrics definitions, we refer to the spreadsheet, and in conversations with engineers about technical implementations, we refer to the spreadsheet.
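One lightweight way to keep such a spreadsheet from gathering dust is to check it for gaps now and then. The sketch below assumes the spreadsheet is exported as CSV with one column per attribute; the column names and the two sample rows are hypothetical.

```python
import csv
import io

# The four attributes every metric needs, used here as assumed column names
REQUIRED_FIELDS = ("name", "business_definition", "technical_definition", "owner")

# Hypothetical CSV export of the metrics spreadsheet
SPREADSHEET_CSV = """name,business_definition,technical_definition,owner
active_memberships,Memberships that are not canceled,COUNT(*) WHERE status <> 'canceled',membership-team
churn_rate,Share of members who cancel in a month,,
"""


def load_metrics(csv_text):
    """Parse the export into one dict per metric."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def missing_fields(metric):
    """Required attributes that are blank for this metric."""
    return [f for f in REQUIRED_FIELDS if not (metric.get(f) or "").strip()]


def incomplete_metrics(metrics):
    """Map each incomplete metric's name to the attributes it still lacks."""
    return {m["name"]: missing_fields(m) for m in metrics if missing_fields(m)}
```

Running `incomplete_metrics(load_metrics(SPREADSHEET_CSV))` on the sample shows that `churn_rate` still needs a technical definition and an owner.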

Working through disputes to get to correct, consistently defined metrics is essential to the work we do in creating data platforms. An impasse in one of these disputes means either 1) we are actually referring to two different metrics, or 2) the business needs to get consistent on terminology and operations.

We are considering versioning metrics definitions, and giving them a simple lifecycle like proposed, in progress, and delivered. We have not acted on this yet, but it would help clear up confusion as metrics evolve. Startups like Transform and Supergrain are attempting to address this, but they are very early. I have high hopes!
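Since we have not acted on this yet, the following is only a sketch of what versioned definitions with a simple lifecycle could look like. The three statuses come from the paragraph above; the forward-only transitions, the rule that a revision starts over at proposed, and all names are assumptions.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    IN_PROGRESS = "in progress"
    DELIVERED = "delivered"


# Assumed forward-only flow: proposed -> in progress -> delivered
NEXT_STATUS = {
    Status.PROPOSED: Status.IN_PROGRESS,
    Status.IN_PROGRESS: Status.DELIVERED,
}


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    business_definition: str
    version: int = 1
    status: Status = Status.PROPOSED


def advance(metric):
    """Move a definition one step forward in its lifecycle."""
    if metric.status not in NEXT_STATUS:
        raise ValueError(f"{metric.name} v{metric.version} is already delivered")
    return replace(metric, status=NEXT_STATUS[metric.status])


def revise(metric, new_business_definition):
    """Create the next version; it starts over as proposed (an assumption)."""
    return replace(
        metric,
        business_definition=new_business_definition,
        version=metric.version + 1,
        status=Status.PROPOSED,
    )
```

With something like this, “I thought we were going to exclude canceled memberships” becomes a question about which version of the definition has been delivered, rather than a debate from memory.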

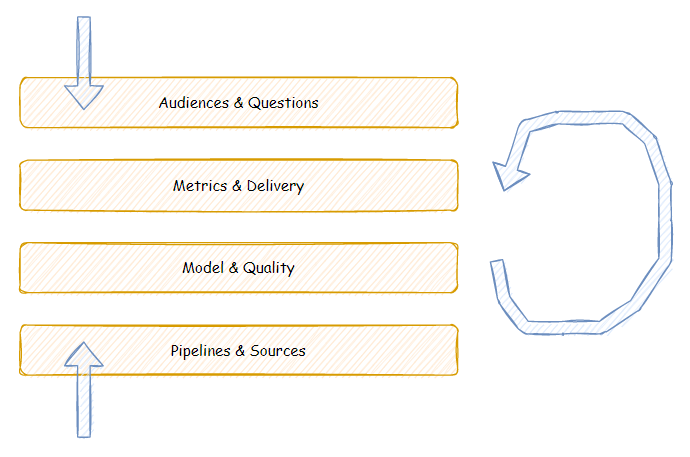

See how the Delivery & Metrics layer fits into the broader Progressive Analytics framework by exploring these related articles:

- Audiences & Questions

- Delivery & Metrics (this article)

- Model & Quality (coming soon)

- Sources & Pipelines

Photo by Irina Shishkina on Unsplash